Iceberg Quantum

Error correction that makes fault-tolerant quantum computers achievable

Take two large (very, very large) prime numbers, multiply them together, and you have the basis for RSA, the most successful cryptography scheme in human history and one that underpins the security of the internet and essentially all modern communication channels. RSA was first introduced by three MIT researchers in 1977, and its security rests on the practical infeasibility of recovering those two primes from their hundreds-of-digits-long product, even given all the classical computing resources in the world. But since 1994 it’s been known that RSA (and other widespread public-key cryptography schemes) can be broken by a sufficiently scaled-up quantum computer running the right quantum algorithm, namely Shor’s algorithm. Preparations for this eventuality, and the existential infosec crisis it would represent, have been in motion for a while now, but given that “sufficiently scaled-up” has been thought to mean a quantum computer with millions of physical qubits and robust error correction, the threat has remained comfortably on the horizon.

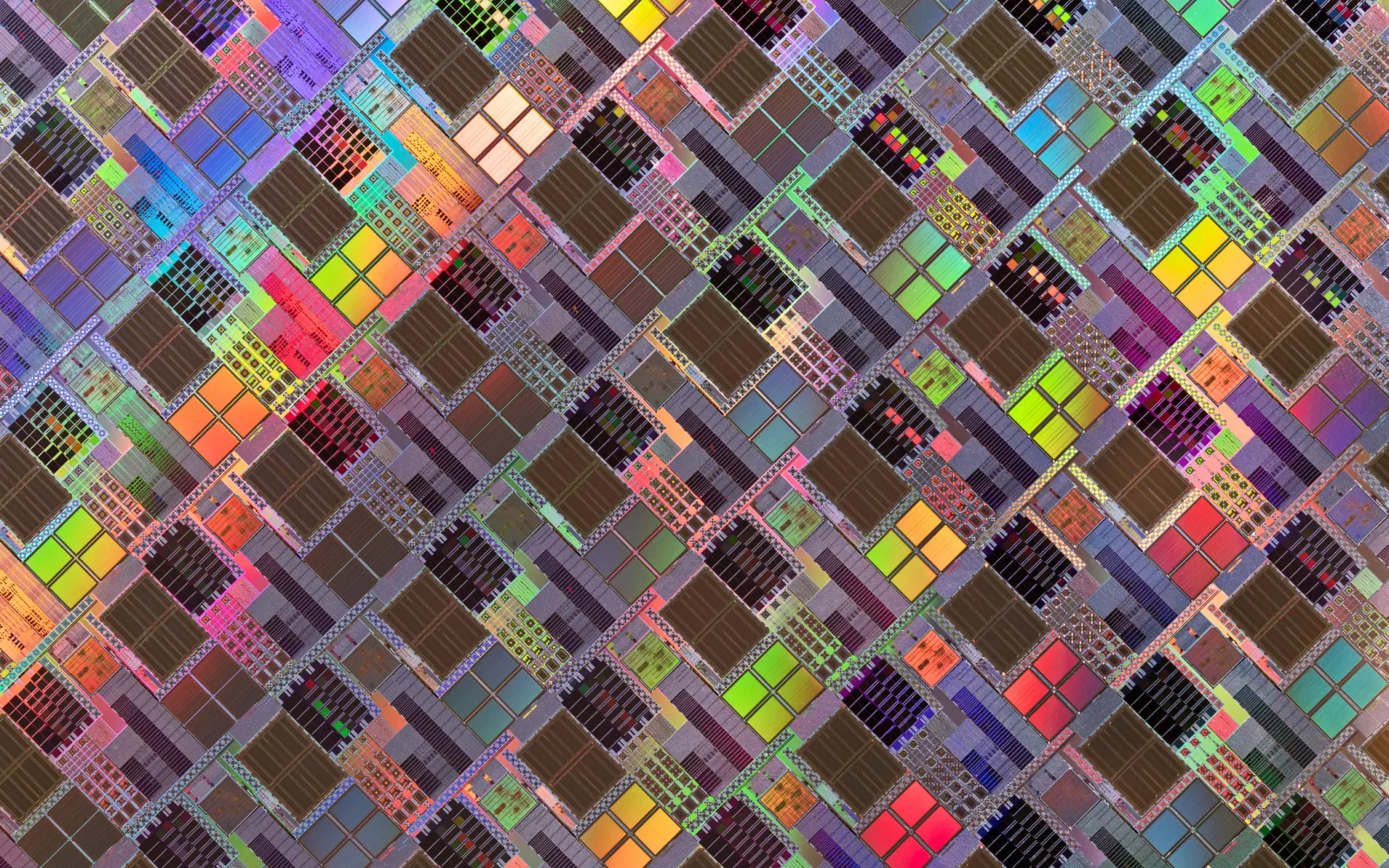

This assumption was already starting to look shaky, and now Iceberg Quantum, a young company out of Sydney, has shown that the runway is even shorter than we thought. With a new preprint that describes its Pinnacle Architecture, Iceberg introduces a novel quantum computing framework that uses quantum low-density parity check (QLDPC) codes to enable factoring of 2048-bit RSA integers with fewer than 100,000 physical qubits under realistic conditions, an improvement of more than an order of magnitude over the current state of the art.

Supporting researchers and entrepreneurs attacking these kinds of foundational deep tech problems is what DCVC prides itself on, which is why we’re also thrilled to announce our participation in Iceberg’s $6 million seed round. This funding will enable the company to accelerate its fault-tolerant architecture development, expand its team and hardware partnerships, and establish a presence in the US.

The news was covered by Quantum Insider and Quantum Computing Report.

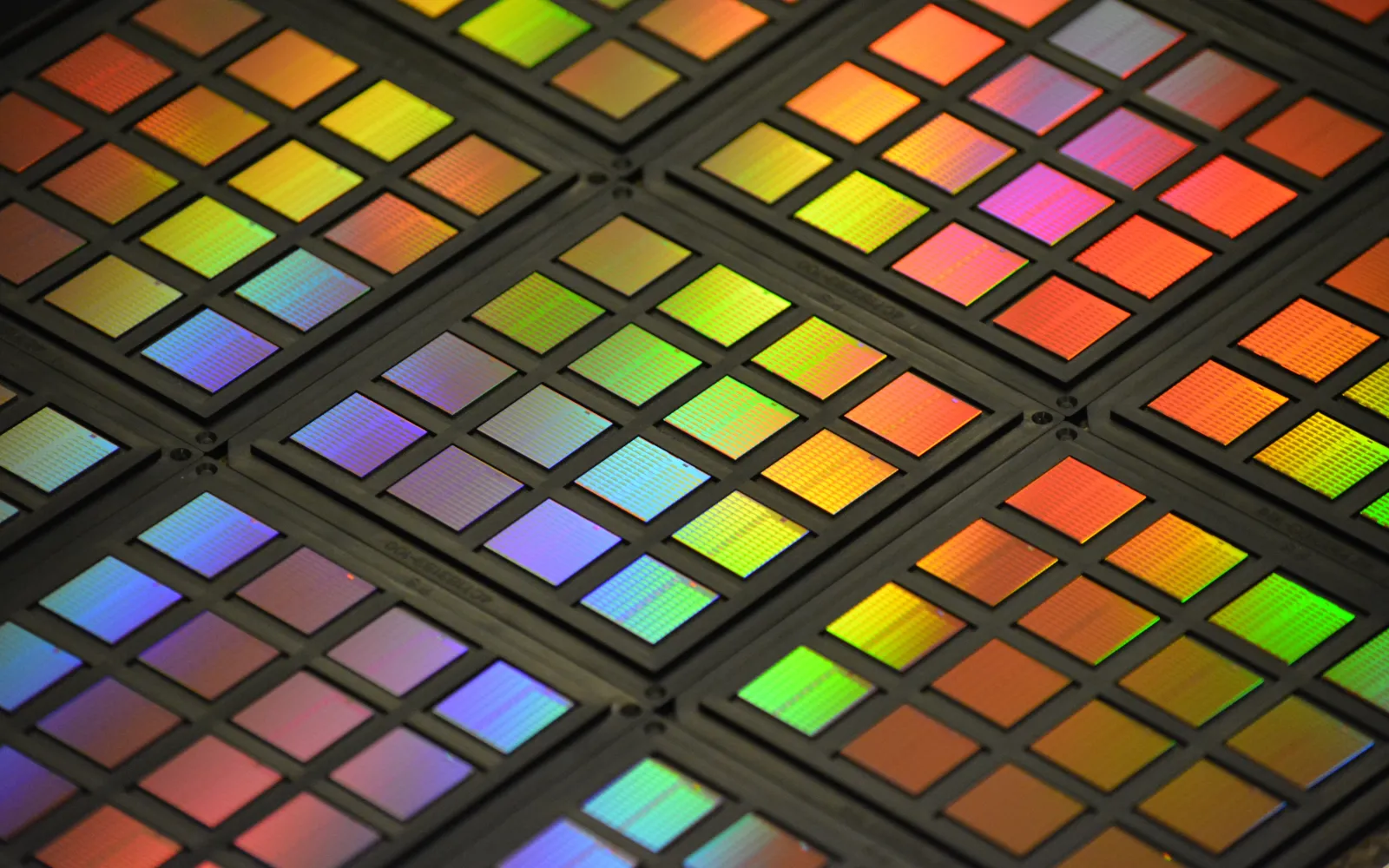

Today’s quantum processors are subject to decoherence and exhibit error rates of roughly 1 in 100 to 1 in 1,000 operations. This creates a significant gap, as practically useful quantum algorithms require executing hundreds of millions or even billions of quantum gates. Bridging this gap and achieving fault-tolerant quantum computing (FTQC) involves encoding logical information across a collection of physical qubits using error-correcting codes. If the hardware error rate stays below a certain fault-tolerance threshold, we can in theory suppress logical errors to any desired level by increasing the “code distance,” which is the minimum number of physical errors needed to cause a logical error that the code cannot detect. In practice, however, this comes with a massive hardware overhead.
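
To make the threshold argument concrete, here is a minimal Python sketch of a widely used rule of thumb for surface-code-like codes, in which the logical error rate falls off as a power of the ratio between the physical error rate and the threshold. The formula, the 1% threshold, and the 0.1 prefactor are illustrative assumptions, not figures from Iceberg’s paper, and the function names are hypothetical.

```python
def logical_error_rate(p, d, p_th=0.01, prefactor=0.1):
    """Heuristic logical error rate for a distance-d code.

    Rule of thumb (an assumption, not taken from the Pinnacle paper):
        p_L ~ prefactor * (p / p_th) ** ((d + 1) // 2)
    where p is the physical error rate and p_th the threshold.
    """
    return prefactor * (p / p_th) ** ((d + 1) // 2)


def min_distance(p, target, p_th=0.01, prefactor=0.1, max_d=201):
    """Smallest odd distance whose heuristic error rate meets the target.

    Returns None if no distance up to max_d is enough, which is always
    the case when p is at or above the threshold.
    """
    for d in range(3, max_d + 1, 2):
        if logical_error_rate(p, d, p_th, prefactor) <= target:
            return d
    return None


if __name__ == "__main__":
    # Physical error rate of 1 in 1,000 and an assumed target of 5e-12.
    print(min_distance(p=1e-3, target=5e-12))
    # Above the assumed threshold, adding distance does not help.
    print(min_distance(p=2e-2, target=5e-12))
```

Under this model, each step up in distance multiplies the logical error rate by a fixed factor smaller than one, but only while the hardware stays below threshold. The cost is that every increase in distance requires more physical qubits per logical qubit.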

Low-overhead architectures seek to minimize the number of physical qubits required through two primary methods. One is the use of cat qubits, a hardware-level design in which bit-flip errors are exponentially suppressed by the physical architecture itself. Although progress has been made here, the engineering challenges are still formidable. The other is to employ more sophisticated error-correcting codes. Surface codes are currently the most practical experimental choice due to their high error threshold, but they are highly inefficient: you typically need 100 to 1,000 physical qubits just to protect a single logical qubit. This poor encoding rate is exactly what Iceberg’s QLDPC codes improve upon; they are designed to maintain strong protection while significantly increasing the number of logical qubits per physical qubit, making large-scale systems more feasible to build.
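
The encoding-rate gap can be illustrated with a rough qubit count. The sketch below assumes the standard rotated surface code layout (d × d data qubits plus d × d − 1 measurement qubits per logical qubit) and, purely as a stand-in for a QLDPC code, the published [[144, 12, 12]] bivariate bicycle code with 144 check qubits. That code is not Iceberg’s; the codes used in the Pinnacle Architecture are not described here. The function names are hypothetical, and the count covers storage only, ignoring the extra qubits needed to perform logical operations.

```python
def rotated_surface_code_qubits(d):
    """Physical qubits for ONE logical qubit in a rotated surface code.

    Assumes d*d data qubits plus d*d - 1 measurement (ancilla) qubits.
    """
    return 2 * d * d - 1


def physical_per_logical(n_data, n_check, k):
    """Physical qubits per logical qubit for a block code that encodes
    k logical qubits in n_data data qubits with n_check check qubits."""
    return (n_data + n_check) / k


if __name__ == "__main__":
    # Surface code overhead at a few distances.
    for d in (7, 12, 22):
        print(d, rotated_surface_code_qubits(d))

    # Illustrative QLDPC stand-in: [[144, 12, 12]] code, 144 check qubits.
    print(physical_per_logical(n_data=144, n_check=144, k=12))
```

At distances between 7 and 22, this layout needs between roughly 100 and 1,000 physical qubits per logical qubit, which matches the range quoted above. At the same distance of 12, the stand-in block code needs about a tenth as many physical qubits per logical qubit as the surface code.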

And cryptography is far from the only application. In its paper, Iceberg also demonstrates an order-of-magnitude reduction in physical qubit requirements for determining the ground-state energy of the Fermi-Hubbard model, a foundational framework in condensed matter physics for which full solutions are, in most contexts, out of reach of classical compute. Quantum simulations based on efficient architectures like Pinnacle, however, may lead us to a better understanding of phenomena like electron coupling in cuprates, extremely promising materials that conduct electricity with zero resistance at temperatures much higher than conventional superconductors do. The knowledge gained through simulations could serve as a blueprint for designing room-temperature superconductors, revolutionizing everything from power grids to maglev transportation.

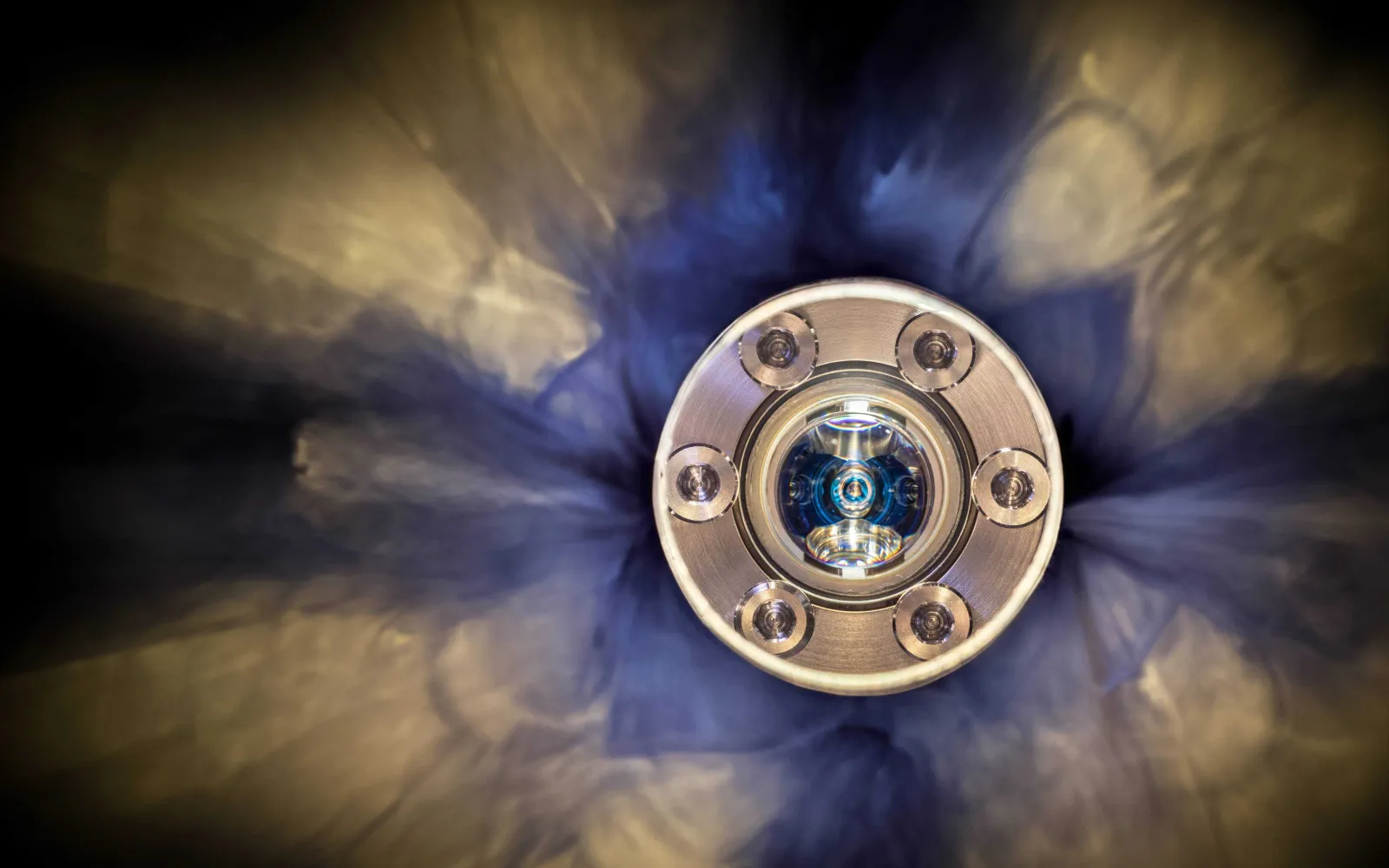

Of course, much hard work remains before fault-tolerant quantum computing is here and the new approach realizes its full potential. But, as with the AI revolution, the convergence of progress in hardware and the right architectures can accelerate the field and allow for advances that only yesterday would have seemed like science fiction. Iceberg is already working with quantum hardware companies like PsiQuantum (photonic qubits), Diraq (spin qubits), and IonQ (trapped ions). Before we see the advent of practical large-scale quantum computation, we need a way to efficiently deal with errors caused by decoherence. Iceberg now has a framework that does just that.